Three Distinctions Higher Ed Needs to Get Right About AI

Students are arriving on campuses across the country confident using AI tools. But that confidence doesn’t necessarily mean they understand how the systems work, or how to use them in ways that support deep learning.

Rather than simply embracing or banishing AI tools in the classroom, Carnegie Mellon University faculty are drawing sharp distinctions among three things: using AI, understanding its strengths and limitations, and designing coursework that teaches effectively alongside it.

Using AI is not the same as understanding it

Students need foundational knowledge about algorithms, data and model limitations, not just the ability to generate answers, said David Touretzky, a research professor in CMU’s School of Computer Science.

In his courses, students are encouraged to use AI to assist with complex programming. But they are responsible for understanding every line of code they submit.

“If a model generates something they don’t understand, I tell them to interrogate it,” Touretzky said of his college robotics students. “Why is this line here? What does it do?”

Students do not need to wait until college to learn the foundations of AI, though, and those who arrive already understanding how the tools work can use them far more effectively. Touretzky co-leads AI4K12.org, which developed national guidelines for K-12 AI education. Through initiatives such as AI4MiddleSchools, young people can learn the core ideas behind machine learning and search algorithms, sometimes through creative, hands-on activities like mapping decision pathways with colored pencils. The goal is not to turn students into specialists early on, but to help them understand the basic ideas.

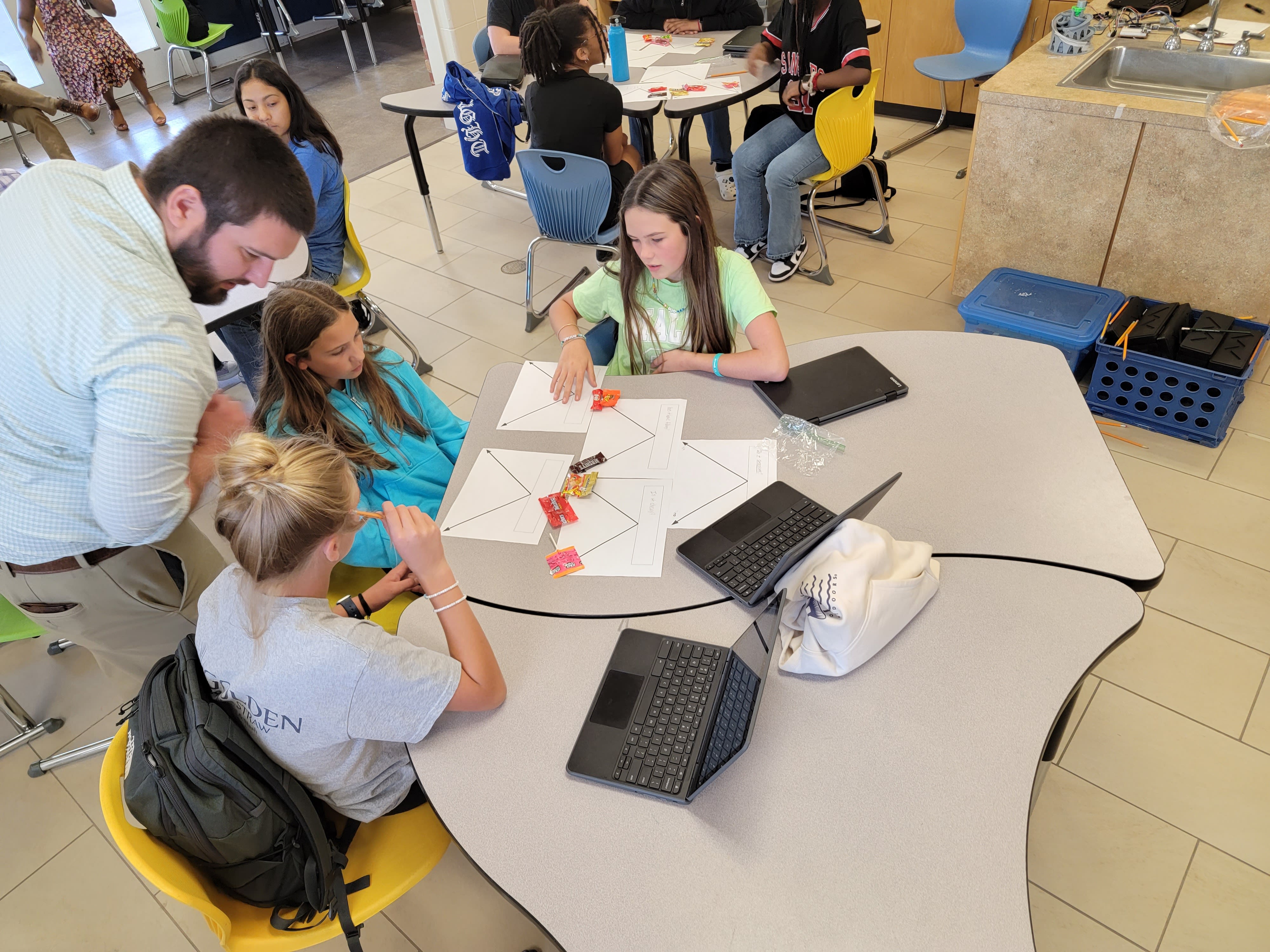

In a Georgia middle school, students participate in "Candy Land," an AI4MiddleSchools activity where they construct decision trees to classify different types of candy, a fundamental lesson in machine learning and automated decision-making.

Teaching with AI is an opportunity for deeper learning, when done right

Ken Koedinger, a learning scientist and professor at CMU, said AI can accelerate learning, but it can also short-circuit it. Keeping teachers involved is key, because they can help students decide when delegating complex tasks to AI is appropriate and productive.

“There’s a huge opportunity to rethink how students engage in complex activities,” Koedinger said. “Some of the less complex tasks can be offloaded, but exactly where that handoff should occur, and which critical-thinking skills remain essential, that’s the challenge.”

That design question is already shaping college courses.

Koedinger advised on Learnvia, a CMU-backed nonprofit collaborative that provides free, structured, AI-enabled courseware for high-enrollment gateway courses. The effort currently centers on Calculus I, with plans to expand to Quantitative Reasoning, Pre-Calculus, Calculus II and Calculus III over the next three years. Instead of leaving AI use to individual students, the platform embeds structured feedback and support directly into assignments, guided by learning science.

“With Learnvia, students encounter algorithmic systems not only as users but as informed participants,” Koedinger said.

Learning to think alongside AI is a skill students can use in their careers beyond college, too.

“The upside,” Koedinger said, “is if we create new tasks that AI can’t do without human involvement, they’re going to be harder, more complicated and ultimately more useful.”

Judgment is a staple of AI literacy

So how do students know when AI helps — and when it gets in the way?

Haoyong Lan, an engineering faculty librarian and AI specialist at CMU Libraries who teaches a course on generative AI literacy, said using AI well begins with understanding and extends to judgment. By the end of the course, Lan said, students move from seeing AI as a convenient shortcut to understanding it as a powerful but imperfect partner.

“AI literacy is the ability to first understand, and then use generative AI tools effectively and ethically to help with work, learning, and research,” Lan said.

That includes a solid grasp of ethics, such as knowing when using AI tools violates academic integrity: what constitutes plagiarism, what counts as acceptable assistance and how AI use intersects with university policies. But AI literacy also stretches across the entire research life cycle, from brainstorming research questions to drafting and publishing a paper.

For that, practice makes perfect.

By working hands-on with AI-powered tools embedded in academic databases like Scopus AI and Scite, his students get used to interrogating the tools.

“Examining AI-generated literature reviews and summaries helps students begin to recognize patterns, what an AI-generated synthesis looks and sounds like, and how those outputs are constructed,” he said.

That experience also helps students spot weaknesses, such as when tools “hallucinate,” producing content or citations that do not exist. Learning to verify claims becomes part of the training.

Across classrooms and disciplines, one message is consistent, Lan said.

“Access to AI isn’t the same as readiness. Students need to understand how these tools work, learn in environments where instructors and technology support each other, and develop the judgment to evaluate what AI produces.”

This content was paid for and created by Carnegie Mellon University. The editorial staff of The Chronicle had no role in its preparation.